David Sathuluri is a Research Associate and Dr. Marco Tedesco is a Lamont Research Professor at the Lamont-Doherty Earth Observatory of Columbia University.

As climate scientists warn that we are approaching irreversible tipping points in the Earth’s climate system, the very technologies being deployed to detect those tipping points – often based on AI – are paradoxically exacerbating the problem by accelerating energy consumption.

The UK’s much-celebrated £81-million ($109-million) Forecasting Tipping Points programme involving 27 teams, led by the Advanced Research + Invention Agency (ARIA), represents a contemporary faith in technological salvation – yet it embodies a profound contradiction. The ARIA programme explicitly aims to “harness the laws of physics and artificial intelligence to pick up subtle early warning signs of tipping” through advanced modelling.

We are deploying massive computational infrastructure to warn us of climate collapse while these same systems consume the energy and water resources needed to prevent or mitigate it. In other words, we are investing in computationally intensive AI systems to monitor whether we will cross irreversible climate tipping points, even as these same AI systems could help push us across them.

The computational cost of monitoring

Training a single large language model like GPT-3 consumed approximately 1,287 megawatt-hours of electricity, resulting in 552 metric tons of carbon dioxide – equivalent to driving 123 gasoline-powered cars for a year, according to a recent study.

GPT-4 required roughly 50 times more electricity. As the computational power needed for AI continues to double approximately every 100 days, the energy footprint of these systems is not static but is exponentially accelerating.
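
The GPT-3 figures and the 100-day doubling claim can be sanity-checked with simple arithmetic. The sketch below is illustrative only: the function and variable names are hypothetical, and it assumes the doubling time holds steady for a full year, which the quoted figure does not guarantee. Note also that the doubling describes computational power, and energy use need not grow one-for-one with it.

```python
# Back-of-the-envelope check of the GPT-3 figures quoted above.
# All names are illustrative; only the three input numbers come from the article.

GPT3_TRAINING_MWH = 1_287      # electricity used in training, MWh
GPT3_TRAINING_TCO2 = 552       # resulting emissions, metric tons of CO2
EQUIVALENT_CARS = 123          # gasoline cars driven for one year


def implied_carbon_intensity(tco2: float, mwh: float) -> float:
    """Metric tons of CO2 emitted per MWh, implied by the two totals."""
    return tco2 / mwh


def annual_growth_factor(doubling_days: float, days_per_year: float = 365.0) -> float:
    """Growth over one year for a quantity that doubles every `doubling_days`.

    Assumes the doubling time stays constant for the whole year.
    """
    return 2 ** (days_per_year / doubling_days)


if __name__ == "__main__":
    intensity = implied_carbon_intensity(GPT3_TRAINING_TCO2, GPT3_TRAINING_MWH)
    per_car = GPT3_TRAINING_TCO2 / EQUIVALENT_CARS
    growth = annual_growth_factor(100)
    print(f"Implied intensity: {intensity:.3f} tCO2 per MWh")
    print(f"Implied emissions per car: {per_car:.2f} tCO2 per year")
    print(f"Compute growth over one year: about {growth:.1f}x")
```

On these numbers, a 100-day doubling time compounds to more than a twelvefold increase over a single year.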

And the environmental consequences of AI models extend far beyond electricity usage. Besides massive amounts of electricity (much of which is still fossil-fuel-based), such systems require advanced cooling that consumes enormous quantities of water, and sophisticated infrastructure that must be manufactured, transported, and deployed globally.

The water-energy nexus in climate-vulnerable regions

A single data center can consume up to 5 million gallons of drinking water per day – sufficient to supply thousands of households or farms. In the Phoenix area of the US alone, more than 58 data centers consume an estimated 170 million gallons of drinking water daily for cooling.
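
To put the water figures in proportion, the rough calculation below divides the Phoenix total across its data centers and converts a large site's daily use into household equivalents. It is a sketch under stated assumptions: the household figure of 300 gallons per day is an assumed typical value rather than a number from this article, and because the article says "more than 58" data centers, the per-site average is an upper bound.

```python
# Rough scale check for the water figures quoted above.
# HOUSEHOLD_GALLONS_PER_DAY is an assumption, not a figure from the article.

PHOENIX_TOTAL_GALLONS_PER_DAY = 170_000_000
PHOENIX_DATA_CENTERS = 58            # the article says "more than 58"
LARGE_SITE_GALLONS_PER_DAY = 5_000_000
HOUSEHOLD_GALLONS_PER_DAY = 300      # assumed typical household use


def average_per_site(total_gallons: float, sites: int) -> float:
    """Mean daily water use per data center."""
    return total_gallons / sites


def households_equivalent(gallons: float, per_household: float) -> float:
    """Number of households whose daily use adds up to `gallons`."""
    return gallons / per_household


if __name__ == "__main__":
    avg = average_per_site(PHOENIX_TOTAL_GALLONS_PER_DAY, PHOENIX_DATA_CENTERS)
    homes = households_equivalent(LARGE_SITE_GALLONS_PER_DAY, HOUSEHOLD_GALLONS_PER_DAY)
    print(f"Phoenix average per site: {avg / 1e6:.1f} million gallons per day")
    print(f"A 5-million-gallon site equals roughly {homes:,.0f} households")
```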

The geographical distribution of this infrastructure matters profoundly, because data centers requiring high rates of mechanical cooling are disproportionately located in water-stressed and socioeconomically vulnerable regions, particularly in Asia-Pacific and Africa.

At the same time, we are deploying AI-intensive early warning systems to monitor climate tipping points in places like Greenland, the Arctic, and the Atlantic circulation system – regions already experiencing catastrophic climate impacts. These tipping points represent thresholds that, once crossed, could trigger irreversible changes within decades, scientists have warned.

Yet computational models and AI-driven early warning systems operate according to different temporal logics. They promise to provide warnings that enable future action, but they consume energy – and therefore contribute to emissions – in the present.

This is not merely a technical problem to be solved with renewable energy deployment; it reflects a fundamental misalignment between the urgency of climate tipping points and the gradualist assumptions embedded in technological solutions.

The carbon budget concept captures the fact that emissions affect temperature rise cumulatively, with significant lags between atmospheric concentration and temperature impact. Every megawatt-hour consumed today by AI systems training on climate models directly reduces the carbon budget available for tomorrow – including the carbon budget available for the energy transition itself.

The governance void

The deeper issue is that governance frameworks for AI development are completely decoupled from carbon budgets and tipping point timescales. UK AI regulation focuses on how much computing power AI systems use, but it does not require developers to ask: is this AI’s carbon footprint small enough to fit within our carbon budget for preventing climate tipping points?

There is no mechanism requiring that AI infrastructure deployment decisions account for the specific carbon budgets associated with preventing different categories of tipping points.

Meanwhile, the energy transition itself – renewable capacity expansion, grid modernization, electrification of transport – requires computation and data management. If we allow unconstrained AI expansion, we risk the perverse outcome in which computing infrastructure consumes the surplus renewable energy that could otherwise accelerate decarbonization, rather than enabling it.

What would it mean to resolve the paradox?

Resolving this paradox requires moving beyond the assumption that technological solutions can be designed in isolation from carbon constraints. It demands several interventions:

First, any AI-driven climate monitoring system must operate within an explicitly defined carbon budget that directly reflects the tipping-point timescale it aims to detect. If we are attempting to provide warnings about tipping points that could be triggered within 10-20 years, the AI system’s carbon footprint must be evaluated against a corresponding carbon budget for that period.

Second, governance frameworks for AI development must explicitly incorporate climate-tipping point science, establishing threshold restrictions on computational intensity in relation to carbon budgets and renewable energy availability. This is not primarily a “sustainability” question; it is a justice and efficacy question.

Third, alternative approaches must be prioritized over the current trajectory toward ever-larger models. These should include integrating human expertise with AI in time-sensitive scenarios, carbon-aware model training, and the use of specialized processors matched to specific computational tasks rather than reliance on universal energy-intensive systems.

The deeper critique

The fundamental issue is that the energy-system tipping point paradox reflects a broader crisis in how wealthy nations approach climate governance. We have faith that innovation and science can solve fundamental contradictions, rather than confronting the structural need to constrain certain forms of energy consumption and wealth accumulation. We would rather invest £81 million in computational systems to detect tipping points than make the political decisions required to prevent them.

The positive tipping point for energy transition exists – renewable energy is now cheaper than fossil fuels, and deployment rates are accelerating. What we lack is not technological capacity but political will to rapidly decarbonize, as well as community participation.

Deploying energy-intensive AI systems to monitor tipping points while simultaneously failing to deploy available renewable energy represents a kind of technological distraction from the actual political choices required.

The paradox is thus also a warning: in the time remaining before irreversible tipping points are triggered, we must choose between building ever-more sophisticated systems to monitor climate collapse or deploying available resources – capital, energy, expertise, political attention – toward allaying the threat.

The post Using energy-hungry AI to detect climate tipping points is a paradox appeared first on Climate Home News.

Climate Change

REPORT: The Hidden Risks of Plastic Pouches for Baby Food

It’s been less than 20 years since baby food in plastic pouches first appeared on supermarket shelves. Since then, these convenient and popular “squeeze-and-suck” products have become the dominant packaging for baby food, transforming the way that millions of babies are fed around the world. But emerging evidence raises concerns that big food brands are feeding our children plastic pollution with unknown consequences, by selling baby food in flexible plastic packaging.

Testing commissioned by Greenpeace International in 2025 found plastic particles in the baby food products of two global consumer goods companies – Danone and Nestlé. The study suggests a link between the type of plastic the pouches are lined with – polyethylene – and some of the microplastics found. Tests also suggest a range of plastic-associated chemicals in the packaging and food of both products.

Sign the petition for a strong Global Plastics Treaty

Governments around the world are now negotiating a Global Plastics Treaty – an agreement that could solve the planetary crisis brought by runaway plastic production. Let’s end the age of plastic – sign the petition for a strong Global Plastics Treaty now.

U.N. General Assembly Embraces Court Opinion That Says Nations Have a Legal Obligation to Take Climate Action

The U.S. was among eight countries that voted against endorsing the nonbinding ruling that said all nations must take steps to limit temperature rise to 1.5 degrees Celsius.

The United Nations General Assembly on Wednesday voted overwhelmingly in favor of a climate justice resolution championed by the small Pacific Island nation of Vanuatu. The resolution welcomes the historic advisory opinion on climate change issued by the International Court of Justice in July 2025 and calls upon U.N. member states to act upon the court’s unanimous guidance, which clarified that addressing the climate crisis is not optional but rather is a legal duty under multiple sources of international law.

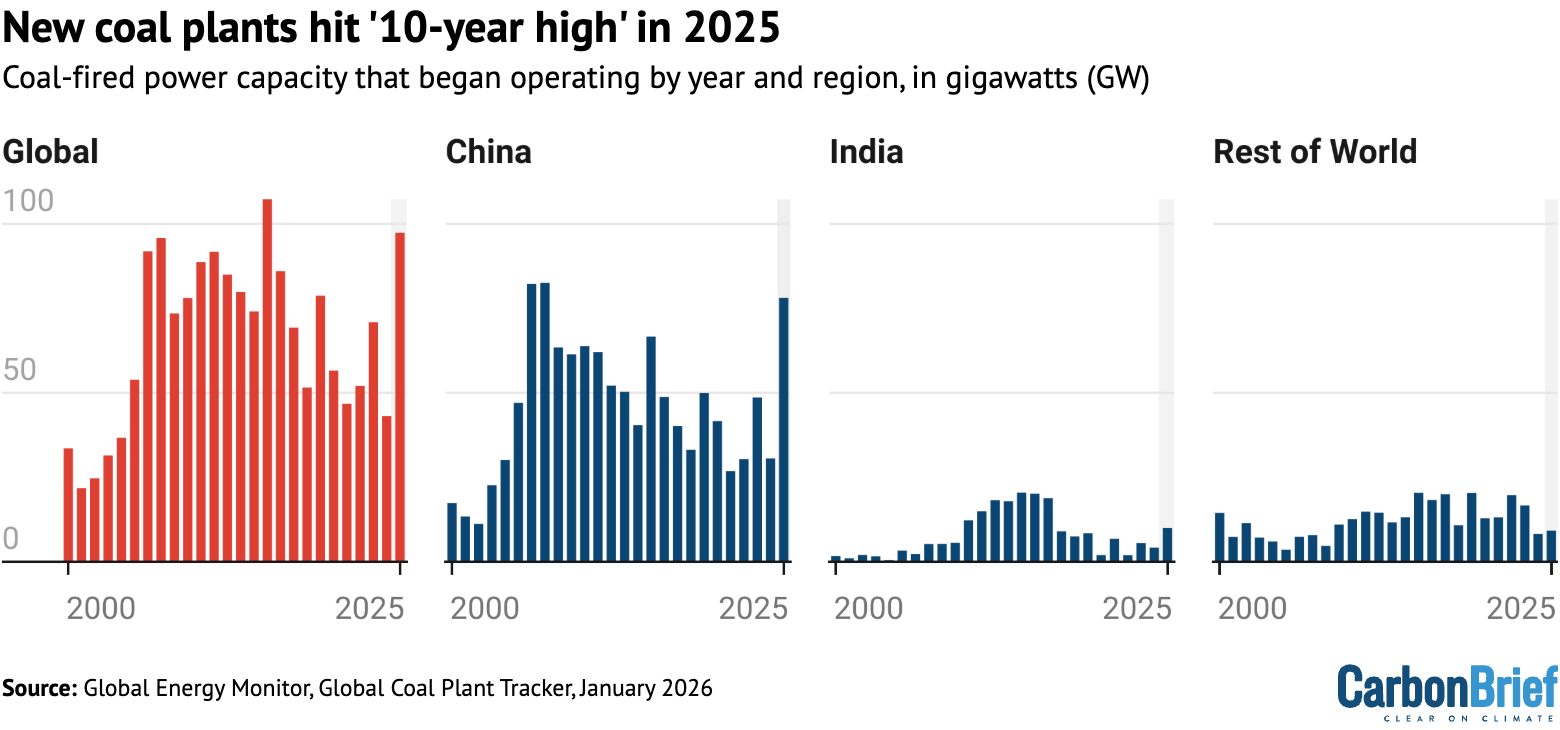

New coal plants hit ‘10-year’ global high in 2025 – but power output still fell

The number of new coal-fired power plants built around the world hit a “10-year high” in 2025, even as the global coal fleet generated less electricity, amid a “widening disconnect” in the sector.

That is according to the latest annual report from Global Energy Monitor (GEM), which finds that the world added nearly 100 gigawatts (GW) of new coal-power capacity in 2025, the equivalent of roughly 100 large coal plants.

It adds that 95% of the new coal plants were built in India and China.

Yet GEM says that the amount of electricity generated with coal fell by 0.6% in 2025 – with sharp drops in both China and India – as the fuel was displaced by record wind and solar output, among other factors.

The report notes that there have been previous dips in output from coal power and there could still be ups – as well as downs – in the near term.

For example, nearly 70% of the coal-fired units scheduled to retire globally in 2025 did not do so, due to postponements triggered by the 2022 energy crisis and policy shifts in the US.

However, GEM says that the underlying dynamics for coal power have now fundamentally shifted, as the cost of renewables has fallen and low usage hits coal profitability.

China and India dominate growth

In 2025, coal-capacity growth hit a 10-year high, with 97 gigawatts (GW) of new power plants being added, according to GEM.

(Capacity refers to the potential maximum power output, as measured in GW, whereas generation refers to power actually generated by the assets over a period of time, measured in gigawatt hours, GWh.)
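
The distinction between capacity and generation is often summarised as a capacity factor: the share of a plant's theoretical maximum output that it actually produced. The snippet below is a minimal sketch of that ratio. The function name and the example plant are hypothetical and are not taken from the GEM report.

```python
HOURS_PER_YEAR = 8_760  # hours in a non-leap year


def capacity_factor(generation_gwh: float, capacity_gw: float,
                    hours: float = HOURS_PER_YEAR) -> float:
    """Share of the theoretical maximum output that was actually generated."""
    return generation_gwh / (capacity_gw * hours)


# Hypothetical 1GW plant that generated 4,380GWh over a year.
example = capacity_factor(generation_gwh=4_380, capacity_gw=1.0)
print(f"Capacity factor: {example:.0%}")
```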

This is the highest level since 2015 when 107GW began operating, as shown in the chart below. This makes 2025 the second-highest level of additions on record.

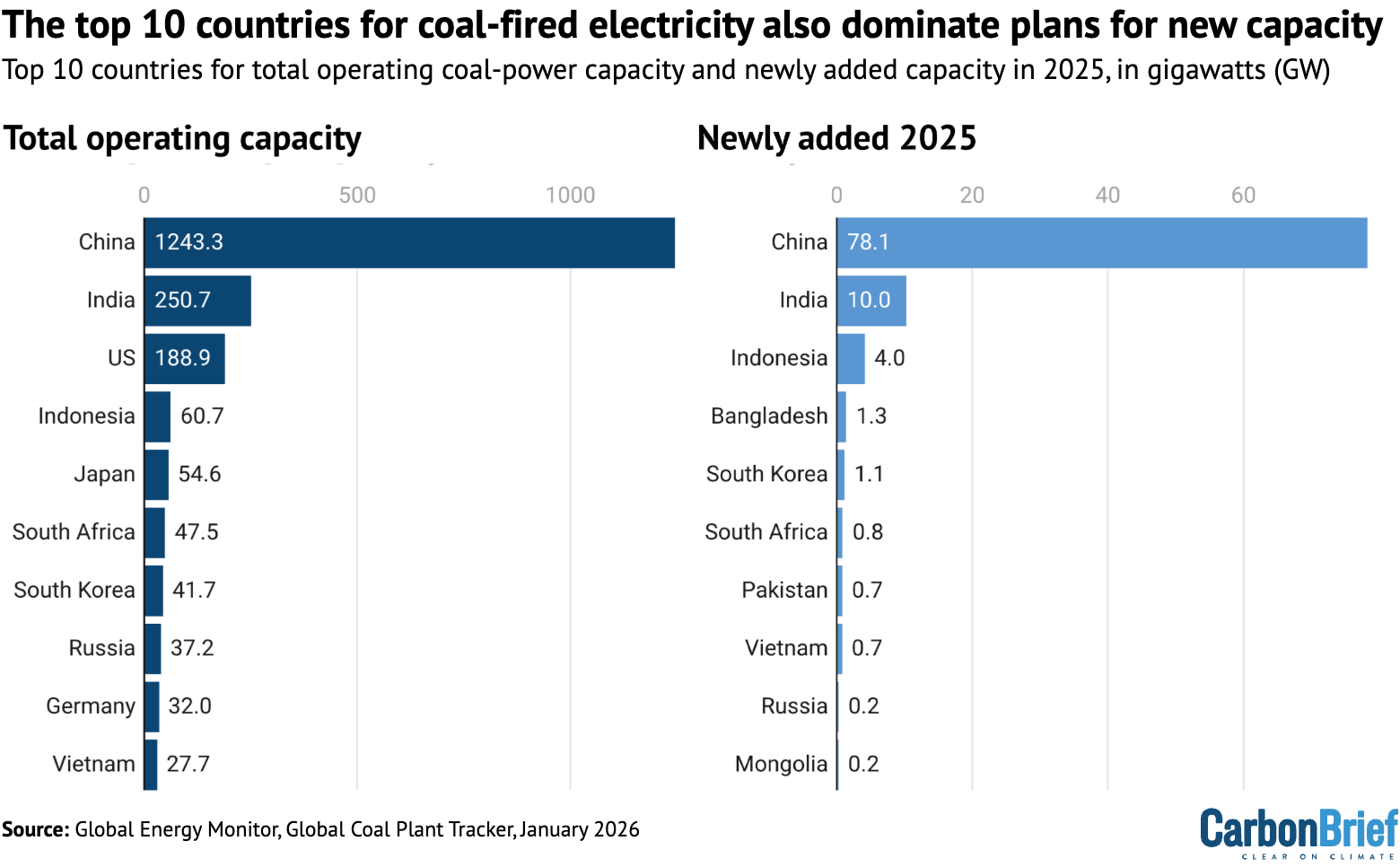

The majority of this growth came from China and India, which added 78GW and 10GW, respectively, against 9GW from all other countries.

Yet GEM points out that, even as coal capacity in China grew by 6%, the output from coal-fired power plants actually fell 1.2%. This means that each power plant would have been running less often, eroding its profitability. Similarly, capacity in India grew by 3.8%, while generation fell by 2.9%.
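
The effect on running hours can be approximated from the two growth rates alone. The sketch below divides the change in generation by the change in capacity to estimate the change in average output per unit of capacity. It is a rough illustration with a hypothetical function name, and it assumes that year-end capacity growth is a fair proxy for the capacity available on average during the year, which will not hold exactly if plants came online late in the year.

```python
def utilisation_change(capacity_growth: float, generation_growth: float) -> float:
    """Fractional change in average output per unit of capacity.

    Both arguments are fractional year-on-year changes, e.g. 0.06 for +6%.
    """
    return (1 + generation_growth) / (1 + capacity_growth) - 1


# Growth rates as quoted from GEM in the paragraph above.
china = utilisation_change(capacity_growth=0.06, generation_growth=-0.012)
india = utilisation_change(capacity_growth=0.038, generation_growth=-0.029)
print(f"China: {china:+.1%}")
print(f"India: {india:+.1%}")
```

Under that assumption, average utilisation of the coal fleet fell by between 6% and 7% in both countries.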

China and India accounted for 87% of the new coal-power capacity that came into operation in the first half of 2025. The shift up to 95% in the year as a whole highlights how those two countries increasingly dominate the sector, GEM says.

Christine Shearer, project manager of GEM’s global coal plant tracker, said in a statement:

“In 2025, the world built more coal and used it less. Development has grown more concentrated, too – 95% of coal plant construction is now in China and India, and even they are building solar and wind fast enough to displace it.”

Both China and India saw solar and wind meet most or all of the growth in electricity demand last year.

Analysis for Carbon Brief last year showed that, in the first six months of 2025 alone, a record 212GW of solar was added in China, helping to make it the nation’s single-largest source of clean-power generation, for example.

However, the country continues to propose new coal plants. In 2025, a record 162GW of capacity was newly proposed for development or reactivated, according to GEM. This brought the overall capacity under development in the country to more than 500GW.

China’s 15th “five-year plan”, covering 2026-2030, had pledged to “promote the peaking” of coal use, while a more recent pair of policies introduced stricter controls on local governments’ coal use.

In India, some 28GW of coal capacity was newly proposed or reactivated last year, bringing the total under development to 107.3GW and under-construction capacity to 23.5GW.

The Indian government is planning to complete 85GW of new coal capacity in the next seven years, even as clean-energy expansion reaches levels that could cover all of the growth in electricity demand.

Outside of China and India, GEM says that just 32 countries have new coal plants under construction or under development, down from 38 in 2024.

Countries that have dropped plans for new coal in 2025 include South Korea, Brazil and Honduras, it says. GEM notes that the latter two mean that Latin America is now free from any new coal-power proposals.

This means that both electricity generation from coal and the construction of new coal-fired power plants are increasingly concentrated in just a few countries, as the chart below shows.

Indonesia’s coal fleet grew by 7% in 2025 to 61GW, with a quarter of the new capacity tied to nickel and aluminium processing, according to GEM.

Turkey – which is gearing up to host the COP31 international climate summit in November – has just one coal-plant proposal remaining, down from 70 in 2015.

The amount of new coal capacity that started to operate in south-east Asia fell for the third year in a row in 2025, according to GEM.

Countries in south Asia that rely on imported energy, such as Pakistan, which is rapidly deploying solar, are increasingly looking to other technologies to protect themselves from fossil-fuel shocks, the GEM report states.

In Africa, plans for new coal capacity are concentrated in Zimbabwe and Zambia, the report shows, with the two countries accounting for two-thirds of planned development in the region.

‘Persistence of policies’

While new coal plants are still being built and even more are under development, GEM notes that the global electricity system is undergoing rapid changes.

Crucially, the growth of cheap renewable energy means that new coal plants do not automatically translate into higher electricity generation from coal.

Without rising output from coal power, building new plants simply results in the coal fleet running less often, further eroding its economics relative to wind and solar power.

Indeed, GEM notes that electricity generation from coal fell globally in 2025. Moreover, a recent report by thinktank Ember found that renewable energy overtook coal in 2025 to become the world’s largest source of electricity.

GEM notes that coal generation may fluctuate in the near term, in particular due to potential increases in demand driven by higher gas prices.

It adds that gas price shocks, such as the one triggered by the Iran war, can cause temporary reversals in the longer-term shift away from coal.

According to Carbon Brief analysis, at least eight countries announced plans to either increase their coal use or review plans to transition away from coal in the first month of the Iran war. However, a much-discussed “return to coal” is expected to be limited.

GEM’s report highlights that global fossil-fuel shocks can have an impact on the phase-out of coal capacity over several years.

In the EU, for example, 69% of planned retirements did not take place in 2025, due to postponements that began in the 2022-23 energy crisis triggered by the Russian invasion of Ukraine, according to the report. Countries across the bloc chose to retain their coal capacity amid gas supply disruptions and concerns about energy security.

Yet coal-fired power generation in the bloc is now more than 40% below 2022 levels. Again, this highlights that coal capacity does not necessarily translate into electricity generation from coal, with its associated CO2 emissions.

Overall, GEM notes that “repeated exposure to fossil-fuel price volatility is as likely to accelerate the shift toward clean energy as it is to delay it”.

GEM’s Shearer says in a statement:

“The central challenge heading into 2026 is not the availability of alternatives, but the persistence of policies that treat coal as necessary even as power systems move increasingly beyond it.”

In the US, 59% of planned retirements in 2025 did not happen, according to GEM. This was due to government intervention to keep ageing coal plants online.

Five coal-power plants have been told to remain online through federal “emergency” orders, for example, even as the coal fleet continues to face declining competitiveness.

Keeping these plants online has cost hundreds of millions of dollars and helped drive an annual increase of 7% in average US household electricity prices, according to GEM.

Despite such measures, Trump has overseen a larger fall in coal-fired power capacity than any other US president, according to Carbon Brief analysis.

Meanwhile, according to new figures from the US Energy Information Administration, solar and wind both set new records for energy production in 2025.

Despite challenges with policy and wider fossil-fuel impacts, the underlying dynamic has shifted, says GEM, as “clean energy becomes more competitive and widely deployed” around the world.

It adds that this raises the prospect of “a more sustained decoupling between coal-capacity growth and generation, particularly if clean-energy deployment continues at current rates”.

The post New coal plants hit ‘10-year’ global high in 2025 – but power output still fell appeared first on Carbon Brief.
