It is hard to imagine a world without AI. It is a powerful tool that transforms resource-intensive industries, products, and services by offering data-based suggestions and supporting smarter decisions. As clean tech continues to evolve, the integration of artificial intelligence (AI) will be crucial to driving further advancements.

AI and Microchips: Driving the Clean Tech Revolution

AI and microchips are transforming renewable energy. AI makes processes faster and more efficient, boosting clean energy innovation. Microchips, crucial for AI and data centers, are key to this progress.

In clean energy, these chips enable smarter trading, improve forecasts for wind and solar power, and enhance safety and efficiency.

Machine learning has been used in clean tech for years to monitor wind farms and detect faults. Adoption in energy trading has been slower, but advances in generative AI are changing that by helping to optimize power markets and improve renewable energy management.

Furthermore, top companies are heavily investing in clean technology, using AI to transform the sector. For instance, Google, Microsoft, and Meta are applying AI in clean energy projects to enhance efficiency and sustainability.

Battery makers like CATL and Tesla are also on board. They use AI to boost battery performance, improve energy storage, and streamline operations. Meanwhile, NVIDIA, the leading chipmaker, is focused on creating advanced AI chips for clean tech.

Together, these companies are revolutionizing technology. They are making renewable energy systems smarter, more efficient, and ready for a sustainable future.

AI-Driven Grid Solutions for Clean Energy

Grid Enhancing Technologies (GETs) play a vital role in optimizing power transmission. These systems help improve the integration of clean energy while reducing the need for costly infrastructure expansions. GETs combine hardware, such as sensors, with data analytics software to make grids more efficient and adaptable.

So why are they important?

- GETs reduce grid congestion by preventing bottlenecks in energy flow.

- They help manage peak loads by handling sudden spikes in energy demand.

- GETs improve planning by enhancing the accuracy of day-ahead energy forecasts.

- They reroute power effectively during outages or maintenance to ensure energy delivery.

How AI Boosts GETs

AI, especially machine learning (ML), is transforming how GETs operate. It analyzes grid data in a fraction of the time that manual analysis would take and improves the performance of grid-enhancing technologies.

Real-Time Data

ML uses real-time weather data to adjust transmission line thermal ratings. This improves grid efficiency and capacity to handle more renewable energy without adding new infrastructure. AI also processes different kinds of grid data, like impedance and voltage angles, at high speed. This optimizes power flow, reduces congestion, and boosts efficiency.
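To make the idea of weather-dependent thermal ratings concrete, here is a minimal sketch of a steady-state heat balance, in which the allowable current rises as the line is cooled more effectively. It is not the ML approach described above and not a standards-grade calculation: the function name, the cooling coefficients, the resistance, and the solar gain are all hypothetical values chosen for illustration.

```python
import math


def ampacity_a(ambient_c, wind_ms, max_conductor_c=75.0,
               resistance_ohm_per_m=8e-5, solar_gain_w_per_m=15.0):
    """Toy line rating from the heat balance I^2 * R = cooling - solar heating.

    All coefficients are illustrative assumptions, not values from a standard.
    """
    delta_t = max_conductor_c - ambient_c
    if delta_t <= 0:
        return 0.0  # ambient already at or above the conductor limit

    # Hypothetical convective loss (W/m): grows with wind speed.
    convective = (1.0 + 1.5 * math.sqrt(max(wind_ms, 0.0))) * delta_t
    # Hypothetical linearized radiative loss (W/m).
    radiative = 0.3 * delta_t

    net_cooling = convective + radiative - solar_gain_w_per_m
    if net_cooling <= 0:
        return 0.0
    return math.sqrt(net_cooling / resistance_ohm_per_m)


hot_still_day = ampacity_a(ambient_c=35.0, wind_ms=0.5)
cool_windy_day = ampacity_a(ambient_c=10.0, wind_ms=4.0)
print(f"hot, still day:  {hot_still_day:.0f} A")
print(f"cool, windy day: {cool_windy_day:.0f} A")
```

Under these assumptions the same conductor can carry noticeably more current on a cool, windy day, which is the headroom that weather-aware ratings aim to unlock.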

Customer Energy Consumption

AI plays a crucial role in understanding customer energy consumption. It accurately predicts energy needs and leverages advanced tools like generative adversarial networks (GANs) to generate synthetic data. These capabilities enhance forecasting accuracy, energy management, and grid reliability.

Supervisory Control and Data Acquisition (SCADA)

Systems like Supervisory Control and Data Acquisition (SCADA) also benefit. AI makes SCADA more accurate and responsive, providing real-time grid performance data that helps operators make better decisions.

As renewable energy grows, smarter grid solutions are essential. In short, GETs, powered by AI, tackle challenges like congestion, peak loads, and clean energy integration.

Supporting Smarter Grid Investments

The rise of renewable energy requires stronger grid infrastructure. AI helps identify weak points in the grid and suggests where investments are most needed. This prevents curtailments and ensures a smoother transition to clean energy systems.

By supporting grid flexibility, AI makes infrastructure investments smarter and more effective. It predicts challenges and optimizes resource allocation, ensuring the grid is ready for the growing share of renewables.

Efficient Wind and Solar Energy Management with AI

Wind energy depends on the weather, which is inherently unpredictable, so energy output is inconsistent. AI addresses this by combining weather analysis tools with historical data to produce more accurate energy forecasts. These forecasts help operators plan better and reduce energy waste.
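As a rough illustration of the last step in such a forecast, the sketch below converts an hourly wind-speed forecast into expected energy using an idealized turbine power curve. The cut-in, rated, and cut-out speeds and the 2,000 kW rating are hypothetical, and the wind-speed forecast itself (the part an AI model would supply) is simply assumed as input.

```python
def turbine_power_kw(wind_ms, cut_in=3.0, rated_speed=12.0,
                     cut_out=25.0, rated_kw=2000.0):
    """Idealized power curve; all parameters are illustrative assumptions."""
    if wind_ms < cut_in or wind_ms >= cut_out:
        return 0.0  # too little wind, or shut down for safety
    if wind_ms >= rated_speed:
        return rated_kw
    # Between cut-in and rated speed, power scales with the cube of wind speed.
    fraction = (wind_ms ** 3 - cut_in ** 3) / (rated_speed ** 3 - cut_in ** 3)
    return rated_kw * fraction


def forecast_energy_kwh(hourly_wind_ms):
    """Sum hourly power to get energy, assuming one value per hour."""
    return sum(turbine_power_kw(v) for v in hourly_wind_ms)


hypothetical_forecast = [2.0, 5.5, 8.0, 12.5, 14.0, 26.0]
print(f"expected energy: {forecast_energy_kwh(hypothetical_forecast):.0f} kWh")
```

Because power rises with the cube of wind speed below the rated speed, small errors in the wind forecast become large errors in the energy forecast, which is why better weather inputs matter so much.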

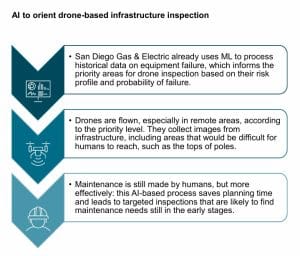

AI also enhances wind farm operations through predictive maintenance. Sensors collect real-time data to identify potential issues early.

- For example, AI detects yaw system misalignments that reduce turbine output or gearbox problems from unusual vibrations.

- It eliminates the need for manual pitch inspections by spotting blade alignment issues automatically.
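A very simple stand-in for this kind of fault detection is a statistical threshold on sensor readings. The sketch below flags vibration values that deviate strongly from a healthy baseline; the function name, the baseline size, the three-sigma threshold, and the sample readings are all assumptions for illustration, and production systems would use far richer models.

```python
from statistics import mean, stdev


def flag_vibration_anomalies(readings, baseline_size=6, threshold_sigma=3.0):
    """Return indexes of readings far from the baseline mean.

    The first `baseline_size` readings are assumed to come from a healthy
    machine and are used to estimate normal behaviour.
    """
    baseline = readings[:baseline_size]
    mu = mean(baseline)
    sigma = stdev(baseline)

    flagged = []
    for index, value in enumerate(readings[baseline_size:], start=baseline_size):
        if sigma > 0 and abs(value - mu) > threshold_sigma * sigma:
            flagged.append(index)
    return flagged


# Hypothetical gearbox vibration amplitudes (arbitrary units).
vibration = [1.0, 1.1, 0.9, 1.0, 1.05, 0.95, 1.02, 3.0]
print(flag_vibration_anomalies(vibration))
```

Only the last reading stands out from the baseline here, so it is the one a maintenance team would be asked to investigate.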

With AI-driven insights, wind farms run more efficiently, which minimizes downtime and maximizes energy production.

Solar energy relies on consistent performance, but challenges like shading, dust, and equipment issues can reduce output. Traditional systems often miss early warning signs, as inverters have limited processing capabilities.

AI-based monitoring offers a better solution. By analyzing vast amounts of data quickly, it detects small performance issues that inverters might overlook. This enables real-time adjustments and faster maintenance.
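One basic check that such monitoring builds on is comparing measured output with what the array should produce at the current irradiance. The sketch below is a minimal version of that comparison; the 0.85 derate factor, the 0.8 alert ratio, and the string labels are hypothetical, and only the 1,000 W/m² reference irradiance reflects standard test conditions.

```python
def expected_power_kw(irradiance_w_m2, rated_kw, system_derate=0.85):
    """Expected output, scaled from the 1,000 W/m^2 reference irradiance.

    `system_derate` is an assumed lump factor for wiring, inverter,
    and temperature losses.
    """
    return rated_kw * (irradiance_w_m2 / 1000.0) * system_derate


def underperforming(measurements, rated_kw, min_ratio=0.8):
    """Return labels whose actual/expected output falls below `min_ratio`."""
    flagged = []
    for label, irradiance_w_m2, actual_kw in measurements:
        expected = expected_power_kw(irradiance_w_m2, rated_kw)
        if expected > 0 and actual_kw / expected < min_ratio:
            flagged.append(label)
    return flagged


# Hypothetical readings: (string label, irradiance in W/m^2, measured kW).
readings = [("string-a", 800.0, 6.5), ("string-b", 800.0, 4.0)]
print(underperforming(readings, rated_kw=10.0))
```

A string that falls well short of its expected output under the same sunlight as its neighbours is a candidate for shading, soiling, or equipment faults.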

Distributed solar systems connected to low- or medium-voltage grids also benefit from AI, which optimizes energy flow and helps distribute solar power evenly across decentralized networks. By tackling these challenges, AI helps solar systems deliver reliable, clean energy while reducing operational delays.

AI’s Role in Battery Management Systems

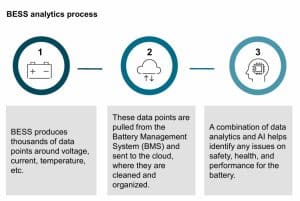

Measuring the state of charge (SOC) in lithium-iron-phosphate (LFP) battery cells is challenging. Traditional battery management systems (BMS) are prone to SOC inaccuracies, which can significantly affect battery performance.

But AI provides a better solution to this problem. It uses data analytics and machine learning to spot safety, health, and performance issues. This leads to more accurate SOC predictions. As a result, less downtime is needed for BMS recalibration, thereby maximizing efficiency and revenue.

The process, however, is complex. For instance, AI-based SOC estimation employs the Single Extended Kalman Filter algorithm. This algorithm estimates SOC by calculating the battery’s open-circuit voltage. Machine learning then fine-tunes the Kalman filter for improved accuracy.
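The sketch below shows the general shape of a Kalman-filter SOC estimator with a single state: predict SOC by counting charge, then correct it using the gap between measured and predicted terminal voltage. It is a simplified illustration under stated assumptions, not the algorithm any particular vendor uses. The open-circuit voltage curve is a made-up straight line (real LFP curves are much flatter, which is what makes the problem hard), the noise settings and cell parameters are hypothetical, and the machine learning tuning step mentioned above is not included.

```python
def open_circuit_voltage(soc):
    """Hypothetical linear OCV curve in volts; real cells are nonlinear."""
    return 3.0 + 0.7 * soc


def ocv_slope(soc):
    """Derivative of the OCV curve, used to linearize the measurement."""
    return 0.7


def ekf_step(soc_est, variance, current_a, measured_v, dt_s, capacity_as,
             r0_ohm=0.01, process_noise=1e-7, measurement_noise=1e-4):
    """One predict/update cycle for a single-state filter (SOC only)."""
    # Predict: coulomb counting (positive current means discharge).
    soc_pred = soc_est - current_a * dt_s / capacity_as
    var_pred = variance + process_noise

    # Update: compare measured voltage with the model's prediction.
    h = ocv_slope(soc_pred)
    predicted_v = open_circuit_voltage(soc_pred) - current_a * r0_ohm
    gain = var_pred * h / (h * var_pred * h + measurement_noise)
    soc_new = soc_pred + gain * (measured_v - predicted_v)
    var_new = (1.0 - gain * h) * var_pred
    return min(max(soc_new, 0.0), 1.0), var_new


def simulate(steps=600):
    """Discharge a simulated cell while the filter starts from a bad guess."""
    true_soc, est_soc, variance = 0.9, 0.5, 0.1
    current_a, dt_s, r0_ohm = 10.0, 1.0, 0.01
    capacity_as = 100.0 * 3600.0  # assumed 100 Ah cell, in ampere-seconds
    for _ in range(steps):
        true_soc -= current_a * dt_s / capacity_as
        measured_v = open_circuit_voltage(true_soc) - current_a * r0_ohm
        est_soc, variance = ekf_step(est_soc, variance, current_a,
                                     measured_v, dt_s, capacity_as, r0_ohm)
    return true_soc, est_soc


true_soc, est_soc = simulate()
print(f"true SOC {true_soc:.3f}, estimated SOC {est_soc:.3f}")
```

Even though the filter starts with a badly wrong guess, the voltage correction pulls the estimate toward the true value. With a flatter voltage curve the slope `h` shrinks and that correction weakens, which is where learned tuning of the filter could plausibly help.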

Data Complexities in Clean Tech AI

AI offers powerful solutions for clean technology but comes with challenges. Training AI algorithms requires vast amounts of data, which demands advanced data management systems. Therefore, clean tech industries must collect, store, and analyze massive data sets while protecting sensitive information through robust privacy measures.

Ethical concerns also need attention. AI systems must prioritize fairness, transparency, and accountability. Clear guidelines are crucial to avoid biases, respect privacy, and ensure clean tech benefits reach all communities equally.

In summary, AI is transforming clean energy with smarter tools that improve forecasting, maintenance, and efficiency. As innovations continue to emerge, we can expect AI adoption in clean tech to accelerate, driving the future of renewable energy.

The post AI and Clean Tech: A Revolution in Renewable Realms appeared first on Carbon Credits.

Carbon Footprint

The real cost of 1 tonne of CO2: Translating carbon into hectares

Every business carbon footprint report ends with a number, the amount of carbon emissions produced by the business, less the amount of carbon reduced and offset, given in tonnes of CO₂. Many of the people who sign off on that number, including those who paid for it, cannot picture what it represents on the ground. A tonne is a unit of mass. CO₂ is invisible. The link between the amount offset in the report and a real piece of restored forest somewhere in the world is almost never indicated.



How Climate Change Is Raising the Cost of Living

Americans are paying more for insurance, electricity, taxes, and home repairs every year. What many people may not realize is that climate change is already one of the drivers behind those rising costs.

For many households, climate change is no longer just an environmental issue. It is becoming a cost-of-living issue. While climate impacts like melting glaciers and shrinking polar ice can feel distant from everyday life, the financial effects are already showing up in monthly budgets across the country.

Today, a larger share of household income is consumed by fixed costs such as housing, insurance, utilities, and healthcare. (3) Climate change and climate inaction are adding pressure to many of those expenses through higher disaster recovery costs, rising energy demand, infrastructure repairs, and increased insurance risk.

The goal of this article is to help connect climate change to the everyday financial realities people already experience. Regardless of where someone stands on climate policy, it is important to recognize that climate change is already increasing costs for households, businesses, and taxpayers across the United States.

More conservative estimates indicate that the average household has experienced an increase of about $400 per year from observed climate change, while less conservative estimates suggest an increase of $900.(1) Those in more disaster-prone regions of the country face disproportionate costs, with some households experiencing climate-related costs averaging $1,300 per year.(1) Another study found that climate adaptation costs driven by climate change have already consumed over 3% of personal income in the U.S. since 2015.(9) By the end of the century, housing units could spend an additional $5,600 on adaptation costs.(1)

Whether we realize it or not, Americans are already paying for climate change through higher insurance premiums, energy costs, taxes, and infrastructure repairs. These growing expenses are often referred to as climate adaptation costs.

Without meaningful climate action, these costs are expected to continue rising. Choosing not to invest in climate action is also choosing to spend more on climate adaptation.

Here are a few ways climate change is already increasing the cost of living:

- Higher insurance costs from more frequent and severe storms

- Higher energy use during longer and hotter summers

- Higher electricity rates tied to storm recovery and grid upgrades

- Higher government spending and taxpayer-funded disaster recovery costs

The real debate is not whether climate change costs money. Americans are already paying for it. The question is where we want those costs to go. Should we invest more in climate action to help reduce future climate adaptation costs, or continue paying growing recovery and adaptation expenses in everyday life?

How Climate Change Is Increasing Insurance Costs

There is one industry that closely tracks the financial impact of natural disasters: insurance. Insurance companies are focused on assessing risk, estimating damages, and collecting enough revenue to cover losses and remain financially stable.

Comparing the 20-year periods 1980–1999 and 2000–2019, climate-related disasters increased 83% globally from 3,656 events to 6,681 events. The average time between billion-dollar disasters dropped from 82 days during the 1980s to 16 days during the last 10 years, and in 2025 the average time between disasters fell to just 10 days. (6)

According to the reinsurance firm Munich Re, total economic losses from natural disasters in 2024 exceeded $320 billion globally, nearly 40% higher than the decade-long annual average. Average annual inflation-adjusted costs more than quadrupled from $22.6 billion per year in the 1980s to $102 billion per year in the 2010s. Costs increased further to an average of $153.2 billion annually during 2020–2024, representing another 50% increase over the 2010s. (6)
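The growth figures quoted in this section can be reproduced from the raw numbers with simple arithmetic. The short sketch below does so; the helper name `percent_change` is just an illustrative choice.

```python
def percent_change(old, new):
    """Percentage change from `old` to `new`."""
    return (new - old) / old * 100.0


# Global climate-related disasters, 1980-1999 versus 2000-2019.
disaster_growth = percent_change(3656, 6681)

# Average annual inflation-adjusted costs, in billions of dollars.
cost_multiple = 102 / 22.6                      # 1980s to 2010s
recent_growth = percent_change(102, 153.2)      # 2010s to 2020-2024

print(f"disaster events: +{disaster_growth:.0f}%")
print(f"annual costs, 1980s to 2010s: x{cost_multiple:.1f}")
print(f"annual costs, 2010s to 2020-2024: +{recent_growth:.0f}%")
```

The results line up with the text: roughly an 83% rise in events, costs more than four times higher, and about a further 50% increase in the most recent period.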

In the United States, billion-dollar weather and climate disasters have also increased significantly. The average number of billion-dollar disasters per year has grown from roughly three annually during the 1980s to 19 annually over the last decade. In 2023 and 2024, the U.S. recorded 28 and 27 billion-dollar disasters respectively, both setting new records. (6)

The growing impact of climate change is one reason insurance costs continue to rise. “There are two things that drive insurance loss costs, which is the frequency of events and how much they cost,” said Robert Passmore, assistant vice president of personal lines at the Property Casualty Insurers Association of America. “So, as these events become more frequent, that’s definitely going to have an impact.” (8)

After adjusting for inflation, insurance costs have steadily increased over time. From 2000 to 2020, insurance costs consistently grew faster than the Consumer Price Index due to rising rebuilding costs and weather-related losses. (3) Between 2020 and 2023 alone, the average home insurance premium increased from $75 to $360 due to climate change impacts, with disaster-prone regions experiencing especially steep increases. (1) Since 2015, homeowners in some regions affected by more extreme weather have seen home insurance costs increase by nearly 57%. (1) Some insurers have also limited or stopped offering coverage in high-risk areas. (7)

For many families, rising insurance costs are no longer occasional financial burdens. They are becoming recurring monthly expenses tied directly to growing climate risk.

How Rising Temperatures Increase Household Energy Costs

The financial impacts of climate change extend beyond insurance. Rising temperatures are also changing how much energy Americans use and how utilities plan for future electricity demand.

Between 1950 and 2010, per capita electricity use increased 10-fold, though usage has flattened or slightly declined since 2012 due to more efficient appliances and LED lighting. (3) A significant share of increased energy demand comes from cooling needs associated with higher temperatures.

Over the last 20 years, the United States has experienced increasing Cooling Degree Days (CDD) and decreasing Heating Degree Days (HDD). Nearly all counties have become warmer over the past three decades, with some areas experiencing several hundred additional cooling degree days, equivalent to roughly one additional degree of warmth on most days. (1) This trend reflects a warming climate where air conditioning demand is increasing while heating demand generally declines. (4)
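For readers unfamiliar with the metric, degree days are computed from daily mean temperatures relative to a base temperature, conventionally 65°F in the United States. The sketch below shows the calculation; the sample temperatures are made up for illustration.

```python
BASE_TEMP_F = 65.0  # conventional U.S. base temperature for degree days


def degree_days(daily_mean_temps_f, base_f=BASE_TEMP_F):
    """Return (cooling_degree_days, heating_degree_days) for a series of days."""
    cooling = sum(max(0.0, t - base_f) for t in daily_mean_temps_f)
    heating = sum(max(0.0, base_f - t) for t in daily_mean_temps_f)
    return cooling, heating


# Hypothetical three-day stretch of daily mean temperatures in °F.
cdd, hdd = degree_days([70.0, 60.0, 80.0])
print(f"CDD = {cdd}, HDD = {hdd}")

# One extra degree on each of 365 already-warm days adds 365 CDD.
extra = degree_days([76.0] * 365)[0] - degree_days([75.0] * 365)[0]
print(f"extra CDD from one degree of warming: {extra}")
```

This is why an increase of several hundred cooling degree days per year corresponds to roughly one additional degree of warmth on most days.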

As temperatures continue rising, households are expected to spend more on cooling than they save on heating. The U.S. Energy Information Administration (EIA) projects that by 2050, national Heating Degree Days will be 11% lower while Cooling Degree Days will be 28% higher than 2021 levels. Cooling demand is projected to rise 2.5 times faster than heating demand declines. (5)

These projections come from energy and infrastructure experts planning for future electricity demand and grid capacity needs. Utilities and grid operators are already preparing for higher peak summer electricity loads caused by rising temperatures. (5)

Longer and hotter summers also affect how homes and buildings are designed. Buildings constructed for past climate conditions may require upgrades such as larger air conditioning systems, stronger insulation, and improved ventilation to remain comfortable and energy efficient in the future. (10)

For many households, this means higher monthly utility bills and potentially higher long-term home improvement costs as temperatures continue to rise.

How Climate Change Affects Electricity Rates

On an inflation-adjusted basis, average U.S. residential electricity rates are slightly lower today than they were 50 years ago. (2) However, climate-related damage to utility infrastructure is creating new upward pressure on electricity costs.

Electric utilities rely heavily on above-ground poles, wires, transformers, and substations that can be damaged by hurricanes, storms, floods, and wildfires. Repairing and upgrading this infrastructure often requires substantial investment.

As a result, utilities are increasing electricity rates in response to wildfire and hurricane events to fund infrastructure repairs and future mitigation efforts. (1) The average cumulative increase in per-household electricity expenditures due to climate-related price changes is approximately $30. (1)

While this increase may appear modest today, utility costs are expected to rise further as climate-related infrastructure damage becomes more frequent and severe.

How Climate Disasters Increase Government Spending and Taxes

Extreme weather events also damage public infrastructure, including roads, schools, bridges, airports, water systems, and emergency services infrastructure. Recovery and rebuilding costs are often funded through taxpayer dollars at the federal, state, and local levels.

The average annual government cost tied to climate-related disaster recovery is estimated at nearly $142 per household. (1) States that frequently experience hurricanes, wildfires, tornadoes, or flooding can face even higher public recovery costs.

These expenses affect taxpayers whether they personally experience a disaster or not. Climate-related recovery spending can increase pressure on public budgets, emergency management systems, and infrastructure funding nationwide.

Reducing Climate Costs Through Climate Action

While this article focuses on the growing financial costs associated with climate change, the issue is not only about money for many people. It is also about recognizing our environmental impact and taking responsibility for reducing it in order to help preserve a healthy planet for future generations.

While individuals alone cannot solve climate change, collective action can help reduce future climate adaptation costs over time.

For those interested in taking action, there are three important steps:

- Estimate your carbon footprint to better understand the emissions connected to your lifestyle and activities.

- Create a plan to gradually reduce emissions through energy efficiency, cleaner technologies, and more sustainable choices.

- Address remaining emissions by supporting verified carbon reduction projects through carbon credits.

Carbon credits are one of the most cost-effective tools available for climate action because they help fund projects that generate verified emission reductions at scale. Supporting global emission reduction efforts can help reduce the long-term impacts and costs associated with climate change.

Visit Terrapass to learn more about carbon footprints, carbon credits, and climate action solutions.

The post How Climate Change Is Raising the Cost of Living appeared first on Terrapass.
